At the University of Southampton (UoS), our policy on Generative Artificial Intelligence (GenAI) is to develop our teaching, learning and assessment so that education at UoS prepares students to be critically digitally literate and responsible, ethical, skilled users of GenAI, ready for work and life in an AI-enabled world. This aligns with the HE sector’s general approach to AI, as articulated in the Russell Group principles of 2023.

We understand that it will take some time for all of us to become aware of how AI can support our teaching and learning. Across the University we are working to develop AI literacy and support for students and staff. Technical development in AI is happening at pace, and it brings significant opportunities and challenges now.

Using GenAI responsibly in education practice

GenAI has an enormous range of potential applications in teaching and learning, both in the content we deliver and in how we deliver or administer education. For example, AI might be used to support marking, curriculum design or content development. Many University-supported tools, such as Blackboard, now include AI-powered functionality that can support curriculum and content design.

If you intend to begin experimenting with GenAI in your education practice, it is useful to bear in mind some ‘rules of engagement’ to ensure that you use AI responsibly.

- Consider where you share information. Use UoS-supported tools such as Microsoft Copilot or Blackboard, which have institutional ‘guardrails’ ensuring that input data is not used to train a model, archived or shared. If you share student work in an open AI tool such as Claude or ChatGPT, you are infringing the student’s copyright. Work submitted to Large Language Models (LLMs) can potentially be analysed, stored and used by others.

- Contextualise your use of AI within your teaching and assessment. How does your use of AI enable you to add value to your students’ learning experience? For example, does using AI for some tasks free you to hold more in-person feedback sessions? Adopt AI holistically, alongside consideration of your learning outcomes and assessment approach.

- Be transparent with students about your use of AI. Talking to your students about how you are using AI to support teaching is an important aspect of ethical AI use and models real-world use of AI. It is equally important to discuss with students why they should not use AI for certain tasks (e.g. tasks designed to develop thinking skills or foundational knowledge).

- Keep ‘the human in the loop’. AI-generated content should be checked and edited. GenAI is well known for ‘hallucinations’ (making things up) and for making incorrect connections while sounding entirely plausible. It can also add to or misinterpret information you have supplied to it, so ensure that there is human oversight of any AI task.

- Keep learning about how AI impacts your discipline. AI is reshaping how our students discover, interact with and produce information. Consider what this means for academic study in your own discipline and the kinds of jobs your students will go into. Are new skills required? Do existing skills need to be reinforced and taught in a different way?

The GenAI working group’s SharePoint site has FAQs on using AI in education that you may find useful.

Bite-sized task

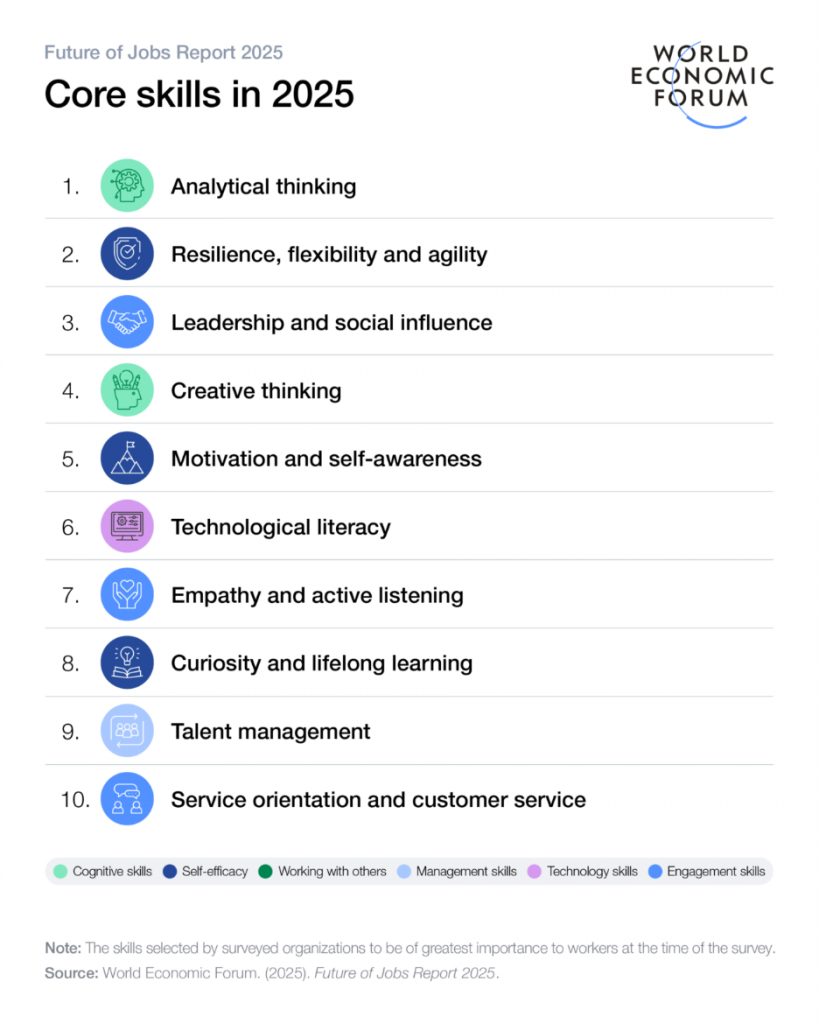

In this activity, you will look at some recent data from the World Economic Forum’s Future of Jobs Report and reflect on how it might relate to your own discipline and your teaching.

Step 1 – learn

The World Economic Forum produces a regular ‘Future of Jobs’ report. Look at the two infographics below, which come from the World Economic Forum Future of Jobs Report 2025. Follow the link to browse the full report, key findings, datasets and other infographics.

Graphic 1 (below) shows the skills that surveyed organisations considered most important for workers at the time the data was collected.

Graphic 2 (below) shows the skills that surveyed organisations expect to grow most rapidly in importance by 2030.

Step 2 – do

Consider how this data might relate to academic study in your own discipline and to the kinds of jobs that your students might get after graduation.

Think about these questions:

- How might this data relate to your own discipline?

- You are unlikely to be teaching these skills directly, so which aspects of your own teaching reinforce or develop them?

- How far do you articulate to your students that these skills might be outcomes of their learning and your teaching?

- Does this change how you think about or articulate use of AI in your teaching?

Step 3 – reflect

What do you think these infographics might tell us at this point in time? What might your reflections suggest about how we embed, include or frame the use of AI in education?

Join the conversation

Post your thoughts in reply to the weekly Teams post to join the conversation.

Further links

Generative AI working group - information and guidance for staff

An introduction to Generative AI practices workshop series recordings – CHEP recorded talks on AI

Digital Learning team guidance on using Copilot and building digital capabilities in AI

World Economic Forum Future of Jobs report 2025

OECD Future of Education and Skills 2030/40 – project and report

Contributor biography

Kate Borthwick is Professor of Digital Education in Languages, Cultures and Linguistics, in the Faculty of Arts and Humanities. She is the Lead for AI in education at the University and chair of the University Digital Education Advisory Group. She is Director of the University’s open online course programme and is an award-winning lecturer and learning designer.

© 2025. This work is openly licensed via CC BY-NC-SA